Skip the fork

Automatically patching Python dependencies with a Hatch build hook

Have you ever found the perfect Python package, only to discover it hasn’t been updated in years? It almost works, but not quite, so you patch a few lines or bump a dependency… and suddenly you’re debating whether to maintain a full fork.

In many cases, a fork is more trouble than it’s worth. You’re now responsible for long-term maintenance, duplicated CI, organizational policy headaches and storage overhead. When all you need is a tiny tweak, there’s a more lightweight approach.

Fetch the package directly from its git source, apply a patch to the code, and install the modified version.

We can do all of this within the Python packaging ecosystem. But before we do, we need to understand how Python packages and their dependencies get installed, and how we can modify that process to suit our needs.

A 10,000-Foot View of the Python Packaging Ecosystem

Before 2017, the Python packaging ecosystem was dominated by two tools: distutils, and setuptools, which extended distutils. However, there were two main problems:

- They didn't have all the features that users wanted, and extending them was difficult. Existing solutions were brittle and hard to maintain.
- Using another packaging tool was not an option, because distutils/setuptools provided the standard interface for installing packages, expected by both users and installation tools like pip.

The solution was a single standardised interface for package installation tools to interact with build tools, proposed in PEP 517. This allowed the ecosystem to flourish, paving the way for new tools like uv and Hatch.

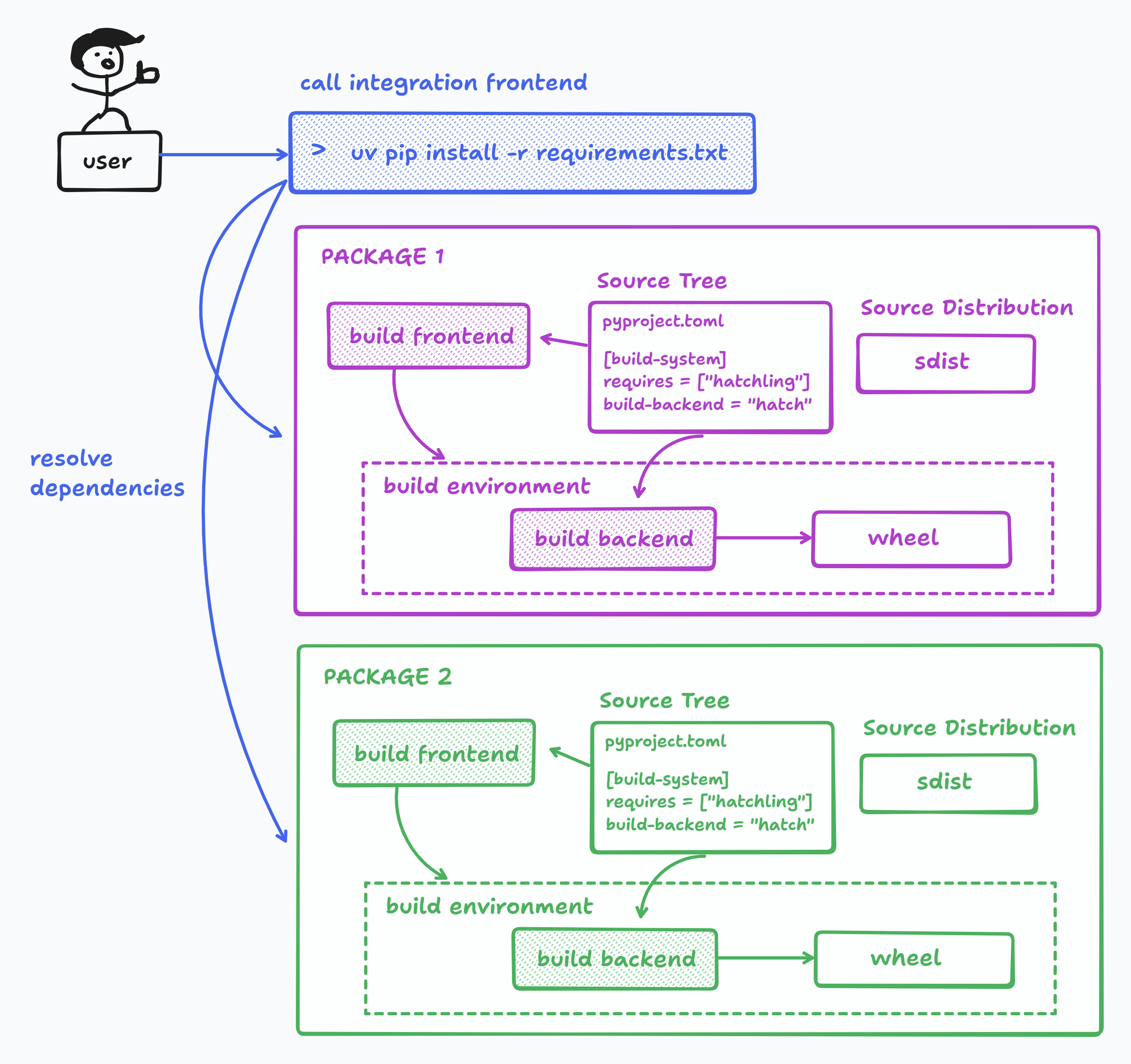

PEP 517 defines three actors:

- Integration Frontend: takes a set of package requirements (e.g. a requirements.txt file) and attempts to update a working environment to satisfy those requirements.
- Build Backend: takes a source tree (for example an unpacked sdist) and builds wheels or sdists from it.
- Build Frontend: takes arbitrary source trees or source distributions and builds wheels from them by calling the build backend specified in each source tree's pyproject.toml.

And this is how they work together to install a package:

- The integration frontend reads pyproject.toml, gathers the list of required dependencies and solves their version constraints. If a compatible wheel is found, the integration frontend downloads and installs it.
- If no wheel is found, a wheel must be built. The integration frontend calls the build frontend, which in turn uses the build backend configured in pyproject.toml to build a wheel. A typical configuration might look like this:

```toml
[build-system]
requires = ["setuptools>=61", "wheel"]
build-backend = "setuptools.build_meta"
```

The build process has the following steps:

- The build frontend creates an isolated build environment, usually an ephemeral virtualenv separate from the user environment. However, some frontends, like uv, let you build in the user environment instead (see --no-build-isolation).
- The build frontend installs the packages listed in build-system.requires into the build environment.
- The frontend imports the build backend specified in build-backend and calls a set of well-known hooks to execute the build.

PEP 517 requires every build backend to implement a set of well-defined hooks that the frontend calls. This lets users swap out backends or frontends without breaking the build process. The two mandatory hooks are:

```python
def build_wheel(wheel_directory, config_settings=None, metadata_directory=None):
    """
    Must build a .whl file, and place it in the specified wheel_directory.
    It must return the basename (not the full path) of the .whl file it creates,
    as a unicode string.
    """


def build_sdist(sdist_directory, config_settings=None):
    """
    Must build a .tar.gz source distribution and place it in the specified
    sdist_directory. It must return the basename (not the full path) of the
    .tar.gz file it creates, as a unicode string.
    """
```

Open The Hatch

Back to our original problem. How do we automatically install a modified source tree without forking the source repo?

Enter Hatch, a modern and extensible build system. Its build backend, hatchling, provides a MetadataHookInterface that lets you dynamically modify the [project] metadata from pyproject.toml during the build process.

That means we can write a hook which:

- Downloads the original repo to a local directory

- Applies a set of patches to the directory to produce the modified source tree

- Injects the file path of our modified source tree into the project.dependencies list of pyproject.toml

The integration frontend then works as described in the previous section to resolve and install all the requirements in pyproject.toml, including our patched package.

Creating the Metadata Hook

Now for a concrete example. I've chosen the together-ai plugin (wearedevx/llm-together) for Simon Willison's llm CLI tool. A recent API change broke the plugin, and another user has patched it in a fork (hcbraun/llm-together).

First, we generate a diff of the changes we need and save it as a patch file.

NOTE: You can generate a diff between any two commits on GitHub, even if they live in different repos. The URL pattern is:

https://github.com/{ORG_1_NAME}/{REPO_1_NAME}/compare/{REPO_1_COMMIT}...{ORG_2_NAME}:{REPO_2_NAME}:{REPO_2_COMMIT}.diff

This gives us a git-compatible diff file that we can apply in our hook:

```shell
ORIGINAL_REPO_COMMIT="1652d0df7820d9c6f83fa7460504272308c96c84"
FORKED_REPO_COMMIT="f7ef62a73a0051c3ea23fe1cb373c617e4120e58"

wget -O llm-together.patch \
  "https://github.com/wearedevx/llm-together/compare/${ORIGINAL_REPO_COMMIT}...hcbraun:llm-together:${FORKED_REPO_COMMIT}.diff"
```
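If wget isn't available, the same download can be done with the standard library. This is a sketch: compare_diff_url and download_patch are hypothetical helper names, and the URL format is the GitHub compare pattern from the note above.

```python
import urllib.request
from pathlib import Path


def compare_diff_url(org1: str, repo1: str, commit1: str, org2: str, repo2: str, commit2: str) -> str:
    """Build a GitHub cross-repository compare URL that returns a .diff."""
    return f"https://github.com/{org1}/{repo1}/compare/{commit1}...{org2}:{repo2}:{commit2}.diff"


def download_patch(url: str, dest: Path) -> Path:
    """Fetch url and save the response body to dest."""
    with urllib.request.urlopen(url, timeout=30) as response:
        dest.write_bytes(response.read())
    return dest
```

For the llm-together example, compare_diff_url("wearedevx", "llm-together", ORIGINAL_REPO_COMMIT, "hcbraun", "llm-together", FORKED_REPO_COMMIT) produces the same URL as the wget command, and download_patch(url, Path("llm-together.patch")) saves it.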

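Next, we configure pyproject.toml to use hatchling, Hatch's build backend. Because our hook imports appdirs, appdirs also has to be listed here so that it gets installed into the isolated build environment:

```toml
[build-system]
requires = ["hatchling>=1.5.0", "appdirs"]
build-backend = "hatchling.build"
```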

Next, we have a choice between creating a new Hatch plugin and using the built-in custom plugin.

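If creating a new plugin, it has to be packaged on its own, expose its hook module through an entry point in the hatch group, and be listed in build-system.requires. It is then enabled in pyproject.toml by name:

```toml
[tool.hatch.metadata.hooks.<PLUGIN_NAME>]
# plugin-specific options, available to the hook as self.config
```

Then, in the plugin's hook module, the hook must be registered with hatchling:

```python
from hatchling.metadata.plugin.interface import MetadataHookInterface
from hatchling.plugin import hookimpl


class PatchBuildHook(MetadataHookInterface):
    PLUGIN_NAME = "<PLUGIN_NAME>"  # must match the name used in the table above

    # TODO: Implement update(self, metadata)


@hookimpl
def hatch_register_metadata_hook():
    return PatchBuildHook
```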

However, hatchling also ships a built-in metadata hook plugin called custom, which needs no registration. By default it loads the hook class from a hatch_build.py file in the project root, but an alternate file can be specified using the path option. Let's use this to minimize complexity:

```toml
[tool.hatch.metadata.hooks.custom]
path = "path/to/metadata/hook/file"
```

We can also specify our own configuration options alongside path:

```toml
[tool.hatch.metadata.hooks.custom]
path = "build_hooks.py"
git_url = "https://github.com/wearedevx/llm-together.git"
git_revision = "1652d0df7820d9c6f83fa7460504272308c96c84"
patch_file = "llm-together.patch"
```

We now have everything we need to write our metadata hook, which only needs to satisfy the following signature:

```python
from hatchling.metadata.plugin.interface import MetadataHookInterface


class PatchBuildHook(MetadataHookInterface):
    def update(self, metadata: dict) -> None:
        pass
```

The update method receives the [project] table of pyproject.toml as a dictionary. Any in-place modifications to this dict persist throughout the rest of the build process.

Any extra options specified in pyproject.toml under [tool.hatch.metadata.hooks.custom] are available through the hook's self.config dict.

Now we can start fleshing out the hook. We’ll start by defining the update function and verifying that the user has specified all of the required config fields. We’re also going to initialize a cache to store the remote source code so that we don’t have to download it each time.

```python
import shutil
import subprocess
from pathlib import Path

import appdirs
from hatchling.metadata.plugin.interface import MetadataHookInterface


class PatchBuildHook(MetadataHookInterface):
    def update(self, metadata: dict) -> None:
        # Persistent per-user cache for downloaded source trees
        cache_dir = Path(appdirs.user_cache_dir("myapp"))
        cache_dir.mkdir(parents=True, exist_ok=True)

        # Options from the hook's pyproject.toml table arrive in self.config
        if not (patch_file := self.config.get("patch_file")):
            raise ValueError("Missing patch_file in pyproject.toml for [tool.hatch.metadata.hooks.custom]")
        if not (git_url := self.config.get("git_url")):
            raise ValueError("Missing git_url in pyproject.toml for [tool.hatch.metadata.hooks.custom]")
        if not (git_rev := self.config.get("git_revision")):
            raise ValueError("Missing git_revision in pyproject.toml for [tool.hatch.metadata.hooks.custom]")
```

Next, we check whether the cache already contains the source code at the revision specified by the user. If not, we clone the repository and check out that revision.

```python
class PatchBuildHook(MetadataHookInterface):
    ...

    def download_source(self, git_url: str, git_rev: str, target_path: Path) -> None:
        # Start from an empty directory so a half-finished clone can't linger
        if target_path.exists():
            shutil.rmtree(target_path)
        target_path.parent.mkdir(parents=True, exist_ok=True)
        subprocess.run(["git", "clone", git_url, str(target_path)], check=True)
        subprocess.run(["git", "checkout", git_rev], cwd=target_path, check=True)

    def is_source_ready(self, path: Path, git_rev: str) -> bool:
        """Return True if path is a git checkout whose HEAD matches git_rev (a full commit SHA)."""
        if not (path / ".git").is_dir():
            return False
        try:
            current = subprocess.run(
                ["git", "rev-parse", "HEAD"],
                cwd=path,
                text=True,
                capture_output=True,
                check=True,
            ).stdout.strip()
        except (OSError, subprocess.CalledProcessError):
            return False
        return current == git_rev

    def update(self, metadata: dict) -> None:
        ...

        # Check if we need to download/update the source
        source_dir = cache_dir / f"source_{git_rev}"
        if not self.is_source_ready(source_dir, git_rev):
            self.download_source(git_url, git_rev, source_dir)
```

And now we can apply the patch to the downloaded source repo. To make this idempotent, we discard any uncommitted changes, including untracked files left behind by a previous run, before applying the patch:

```python
class PatchBuildHook(MetadataHookInterface):
    ...

    def apply_patch(self, source_dir: Path, patch_path: Path) -> None:
        # Blow away uncommitted changes to tracked files...
        subprocess.run(["git", "checkout", "--", "."], cwd=source_dir, check=True)
        # ...and untracked files a previous application of the patch may have created
        subprocess.run(["git", "clean", "-fd"], cwd=source_dir, check=True)
        subprocess.run(["git", "apply", str(patch_path)], cwd=source_dir, check=True)

    def update(self, metadata: dict) -> None:
        ...

        # Check if we need to download/update the source
        source_dir = cache_dir / f"source_{git_rev}"
        if not self.is_source_ready(source_dir, git_rev):
            self.download_source(git_url, git_rev, source_dir)

        self.apply_patch(source_dir, Path(self.root) / patch_file)
```
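The reset-then-apply sequence can also be exercised outside of a build. Below is a standalone sketch of the same idea as a plain function (reset_and_apply is a hypothetical name). It swaps in git reset --hard, which also discards staged changes, and runs git apply --check first so that a patch that no longer fits fails before any file is touched.

```python
import subprocess
from pathlib import Path


def reset_and_apply(source_dir: Path, patch_path: Path) -> None:
    """Restore source_dir to its checked-out commit, then apply patch_path.

    Safe to call repeatedly. patch_path should live outside source_dir,
    otherwise `git clean` would delete it as an untracked file.
    """
    patch = str(Path(patch_path).resolve())  # git runs with cwd=source_dir

    def git(*args: str) -> None:
        subprocess.run(["git", *args], cwd=source_dir, check=True)

    git("reset", "--hard", "--quiet", "HEAD")  # discard staged and unstaged edits to tracked files
    git("clean", "-fdq")  # delete untracked files, e.g. ones the patch created last time
    git("apply", "--check", patch)  # verify the patch applies, without modifying anything
    git("apply", patch)
```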

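And the final step is to add the patched source tree to the dependencies list as a PEP 508 direct reference. Two settings make hatchling accept this: dependencies must be listed in project.dynamic (and not declared statically), since the hook now sets that field, and tool.hatch.metadata.allow-direct-references must be set to true, since hatchling rejects direct references in dependencies by default.

```python
def update(self, metadata: dict) -> None:
    ...

    # PEP 508 direct reference; as_uri() yields a valid file:// URL on every OS
    dep_spec = f"llm-together @ {source_dir.resolve().as_uri()}"
    dependencies = metadata.setdefault("dependencies", [])
    if dep_spec not in dependencies:
        dependencies.append(dep_spec)
```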
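Once a wheel has been built (for example with hatch build or python -m build --wheel), one way to confirm that the hook ran is to read the Requires-Dist entries from the wheel's METADATA file. A minimal standard-library sketch; requires_dist is a hypothetical helper name.

```python
import zipfile
from email.parser import HeaderParser
from pathlib import Path


def requires_dist(wheel_path: Path) -> list[str]:
    """Return the Requires-Dist entries recorded in a wheel's METADATA file."""
    with zipfile.ZipFile(wheel_path) as whl:
        metadata_name = next(
            name for name in whl.namelist() if name.endswith(".dist-info/METADATA")
        )
        headers = HeaderParser().parsestr(whl.read(metadata_name).decode("utf-8"))
    # METADATA uses email-header syntax; Requires-Dist may appear many times
    return headers.get_all("Requires-Dist") or []
```

If the hook worked, the returned list should contain an llm-together @ file:///... entry pointing at the cached, patched checkout.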

And there you have it! A simple way to manage modifications to upstream Python dependencies. You can find the complete code at